AI triggers efficiency boost in photonics

Artificial intelligence (AI) is changing value creation in photonics and its target markets. A SPECTARIS conference in Berlin in early October 2025 highlighted important development trends.

Many industrial users share the same goal when it comes to AI: dense, ideally 360°, quality monitoring. Today's manufacturing processes are characterized by material diversity, high quality requirements, and complex components and assemblies whose value rises sharply along the process chain, all amid growing time and cost pressures. A car door has around 70 weld seams, and a production error there causes damage of barely 100 euros. Batteries or fuel cell stacks, by contrast, have hundreds of welded joints, and errors are not an option: by the time these components are welded, they are already worth several thousand euros, as Martin Stambke, TRUMPF product manager for sensor technology, reported. Dr. Jan-Phillip Weberpals, AUDI expert for laser beam processes, sensor technology, and machine learning, and Konstantin Ribalko, key account manager at Precitec, described similar issues at the conference: “When connecting cells in battery modules, despite the wide range of material thicknesses and welding depths, not a single one of the hundreds of weld seams per component may be defective.”

Process understanding, systematic quality control, and data linking

Systematic quality control is intended to minimize this costly waste. Manufacturers monitor production processes using camera and OCT systems, emission-based sensor technology, 3D imaging via triangulation, and even computed tomography. This is expensive and generates vast amounts of data, which paves the way for AI. Industry representatives in Berlin outlined what they hope AI will deliver. To prevent errors, AI is to optimize the parameter configuration of processes in which AI-supported image processing detects errors in real time, thus reducing the need for expensive non-destructive testing. In the future, AI solutions are also to readjust running manufacturing processes. The goal is first-time-right production.

Prof. Carlo Holly, head of the RWTH Chair of Optical Systems Technology and head of the Data Science and Measurement Technology department at Fraunhofer ILT, outlined in his presentation how this goal can be achieved. The path leads from data-informed machine learning to data- and physics-informed machine learning. “Pure language models that extract physical content from language alone, i.e., that learn only from language, often fall short in photonics because we usually already have explicit knowledge about the physics of the processes,” he explained. Ignoring this knowledge and starting from scratch is a detour. He therefore advocates direct interaction between the models and the real physical world. This, he said, is the methodical path to autonomous, self-learning laser systems for materials processing.

The AI revolution has long since begun

According to Holly, this development consists of four stages. It began with design and modeling using multiphysics models, ray tracing, and CAD tools, which are increasingly supported or optimized by AI in areas such as optical design and component design. The second stage involves process monitoring and quality inspection. However, inline monitoring only provides retrospective error messages and data on physical deviations, whereas what is needed are predictions. The third stage therefore involves forecasts based on an in-depth understanding of the causes of errors. “At this point, stage four, active corrective intervention in the process, is close at hand,” he explained. Data science provides photonics with very powerful tools that can be applied to many photonic processes. From this perspective, the future of AI-supported photonic manufacturing has long since begun.

The manufacturing world still looks different. Companies generate large amounts of data, but it often ends up unused in data silos or is evaluated with little insight gained. Causal relationships remain unclear and error assessments subjective, while testing effort and process qualification spiral out of control. AI is needed to channel the flood of data in a meaningful and beneficial way. But it is no magic wand. Implementing AI is a complex strategic and interdisciplinary task. The first step is to manage the coexistence of centralized and decentralized IT infrastructure, cloud services, and heterogeneous databases across locations in order to extract value-added information from raw data. “The more data you make available for your AI models, the better and more realistic they become,” says Microsoft expert Stephan Kiene. It is a self-reinforcing process. The data platform is thus becoming a key driver of innovation for companies, and AI implementation requires a well-thought-out data strategy.

High complexity in photonics

This applies across all industries. In photonics, however, heterogeneous methods and processes that often only experts understand are added to the mix. Applications are so specific that pre-trained models, and often the data for training the AI as well, are lacking; they usually have to be generated by the user. This is where pitfalls lurk. Thomas Koschke from BCT Steuerungs- und DV-Systeme and Max Zimmermann from Fraunhofer ILT reported on a project in which they trained AI for the parameterization and control of robot-assisted laser metal deposition (LMD) processes. “To achieve good results, you have to set many parameters, which often interact with each other. If the feed rate and laser power are not matched, there is a risk of overheating or irregular powder melting,” said Koschke. Testing all variants to find the optimal parameterization would be too time-consuming. AI is therefore intended to help with process setup and then also serve for inline process monitoring.
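To give a sense of why exhaustive testing is impractical, the following sketch counts the runs a full-factorial experiment would need. The parameter names, the number of settings per parameter, and the minutes per run are hypothetical values chosen for illustration, not figures from the BCT/Fraunhofer ILT project.

```python
from math import prod

# Hypothetical LMD parameters and the number of settings tested for each
# (illustrative values only, not taken from the project described above).
LEVELS = {
    "laser_power": 10,
    "traverse_speed": 10,
    "powder_feed_rate": 10,
    "spot_diameter": 5,
    "standoff_distance": 5,
}

def full_factorial_runs(levels: dict[str, int]) -> int:
    """Number of test runs needed to try every combination of parameter settings."""
    return prod(levels.values())

def total_days(runs: int, minutes_per_run: float) -> float:
    """Convert a number of runs into calendar days of round-the-clock testing."""
    return runs * minutes_per_run / (60 * 24)

runs = full_factorial_runs(LEVELS)           # 10 * 10 * 10 * 5 * 5 = 25,000 combinations
days = total_days(runs, minutes_per_run=10)  # hypothetical 10 minutes per deposition test
```

Even with these modest assumptions, the full grid amounts to 25,000 runs, or roughly 174 days of continuous testing, and every additional parameter multiplies the count. This combinatorial growth is what makes a learned model that guides process setup attractive compared with brute-force search.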

Generating the data that the AI needed to learn how to detect errors and control the LMD process proved difficult. Although the BCT software correctly assigned images, temperature, laser power, voltage, and other sensor data to the component topology, a problem became apparent when the data was converted into uniform formats, scales, and units: the timestamps were incorrect. Only gradually did the team discover the causes, such as minor discrepancies in sensor configurations or a misaligned nozzle. “Every detail counts, even during data collection,” Koschke warned. “Before starting to build the data model with in-situ data and ex-situ data from metallographic analyses or CT scans, all discrepancies must be clarified,” added Zimmermann. Only when all data is precisely aligned in time and space can the full potential of AI be exploited. Using a human-in-the-loop approach, the team was finally able to create the database that allowed the AI solution to mature. The result is more homogeneous structures in the LMD process. “With data-based predictions, we build more cleanly, get closer to the target geometries, and have more stable processes,” Zimmermann reported.
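The report does not say how the timestamp errors were corrected. One generic technique for spotting a constant time offset between two sensor streams is cross-correlation: if both streams react to the same physical event (for example, the laser switching on), the lag at which their correlation peaks estimates the offset. The sketch below assumes both streams share the same sampling rate and differ only by a fixed shift; it would not catch clock drift or a spatial error such as a misaligned nozzle, and the function names are hypothetical.

```python
import numpy as np

def estimate_lag(reference: np.ndarray, signal: np.ndarray) -> int:
    """Estimate by how many samples `signal` trails `reference`.

    Assumes equal sampling rates and a constant offset. A positive result
    means the event appears later in `signal` than in `reference`.
    """
    ref = reference - reference.mean()  # remove the DC level so the peak reflects shape
    sig = signal - signal.mean()
    corr = np.correlate(sig, ref, mode="full")
    # In "full" mode, output index len(ref) - 1 corresponds to zero lag.
    return int(np.argmax(corr)) - (len(ref) - 1)

def correct_timestamps(timestamps: np.ndarray, lag: int, sample_period: float) -> np.ndarray:
    """Shift the lagging stream's timestamps back onto the reference time base."""
    return timestamps - lag * sample_period

# Example: a laser-on step seen by two sensors, the second one 5 samples late.
laser_power = np.zeros(100)
laser_power[30:40] = 1.0
temperature = np.zeros(100)
temperature[35:45] = 1.0

lag = estimate_lag(laser_power, temperature)  # 5
```

Removing the mean before correlating keeps a constant signal level from dominating the result, so the peak is driven by the shape of the event rather than by the baseline.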

The next step is sensor fusion

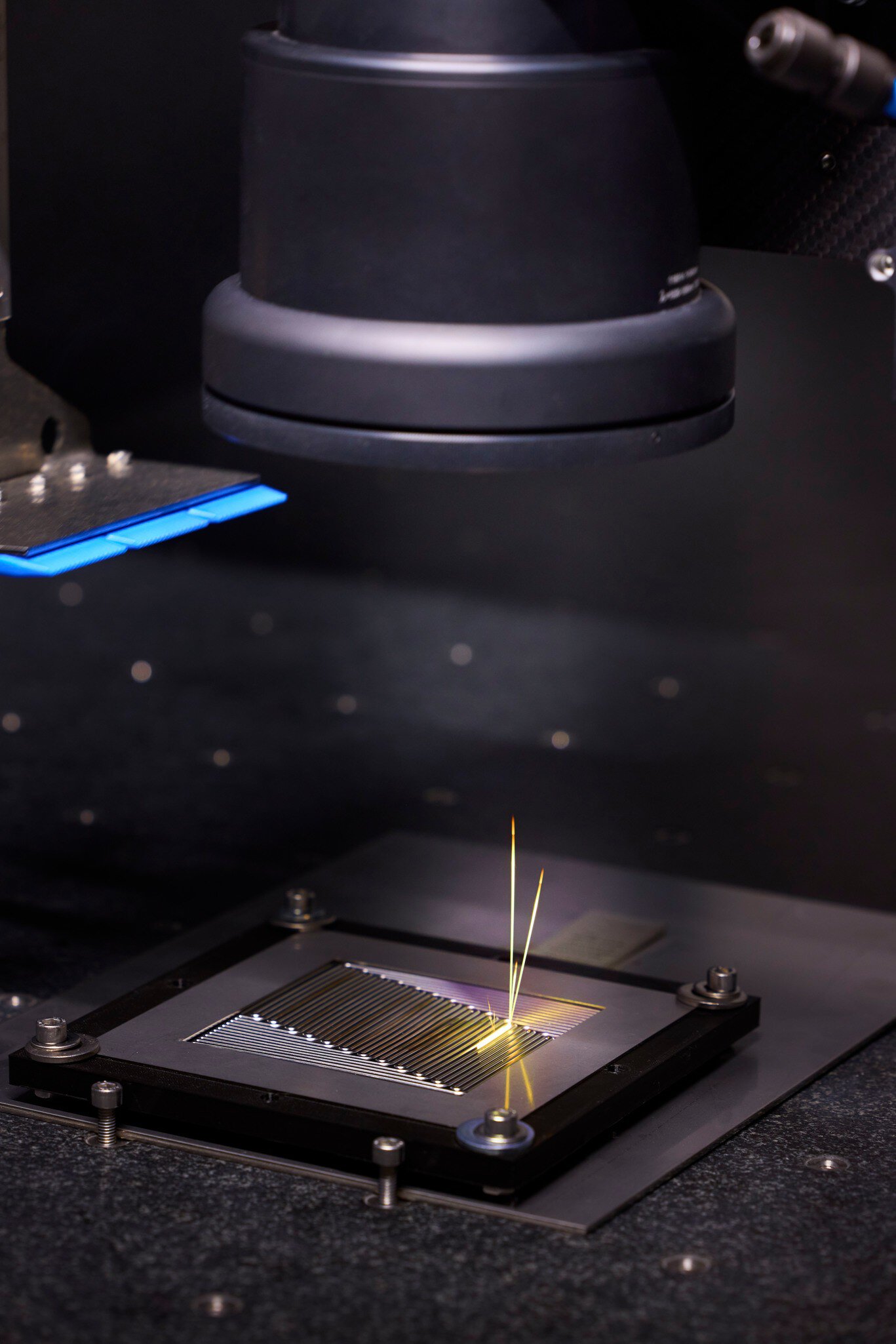

Christoph Franz, managing director of 4D Photonics GmbH, presented a solution in Berlin that simplifies building such a database from numerous sensors and is designed to tag the data with precise timestamps right away. The goal is full traceability in all conceivable laser applications, from welding and cutting different materials to cleaning, laser ablation, and additive processes. The solution is a fist-sized sensor cube with over 42 individual channels for easy sensor fusion. “The aim is to generate as much process data as possible: process emissions on the workpiece, laser power, high-resolution spectral and temporal measurements of the emitted wavelengths in order to detect errors,” he explained. Sensor-side hardware is the key to making AI usable for quality control. To prevent the heterogeneous sensor data from drifting apart, the 4D sensor timestamps it using the Precision Time Protocol (PTP) in 10-nanosecond increments, and analog and digital inputs are synchronized. According to Franz, the individual sensors thus form a high-precision information network in which no process information is lost and every anomaly is documented with a timestamp. In addition, software visualizes the components, simplifying comparative analyses of weld seams, for example. In other words, it is a multi-tool for sensor fusion that is already being used for process monitoring in battery production. 4D Photonics is working on various research projects with partners from industry and research to make the solution usable for AI-monitored processes. It will then be used, for example, in welding processes to automatically detect pores, blistering, unstable keyholes, or spatter and ejection. The 4D solution has already proven its potential in a series of experiments at the German Electron Synchrotron DESY, where ongoing welding processes were examined using hard X-rays.
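Beyond the use of PTP, the article does not describe how 4D Photonics implements its time stamping. The sketch below only illustrates the general idea that makes fusion possible once every sample carries a timestamp from a shared clock: the per-channel streams can be merged into one time-ordered record. Storing timestamps as integer nanoseconds, rounding them down to a 10 ns tick, and the channel names are assumptions for illustration.

```python
import heapq
from typing import Iterable, NamedTuple

TICK_NS = 10  # timestamp granularity, taken from the 10 ns figure quoted above

class Sample(NamedTuple):
    t_ns: int     # timestamp in integer nanoseconds on the shared clock
    channel: str  # hypothetical channel name, e.g. "photodiode"
    value: float

def quantize(t_ns: int, tick_ns: int = TICK_NS) -> int:
    """Round a timestamp down to the nearest tick."""
    return (t_ns // tick_ns) * tick_ns

def fuse(*streams: Iterable[Sample]) -> list[Sample]:
    """Merge per-channel streams, each already sorted by time, into one time-ordered log.

    Rounding down is monotonic, so every stream stays sorted after quantization,
    which is what heapq.merge requires.
    """
    quantized = ([s._replace(t_ns=quantize(s.t_ns)) for s in stream] for stream in streams)
    return list(heapq.merge(*quantized, key=lambda s: s.t_ns))

# Example with two hypothetical channels
photodiode = [Sample(1_000_003, "photodiode", 0.8), Sample(1_000_027, "photodiode", 0.9)]
laser_power = [Sample(1_000_015, "laser_power", 2000.0)]
merged = fuse(photodiode, laser_power)
# merged timestamps: 1_000_000, 1_000_010, 1_000_020
```

Integer nanoseconds are used because floating-point seconds since the Unix epoch cannot represent 10 ns steps. A real system would also have to deal with late-arriving samples and channel-specific latencies, which this sketch ignores.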

“Synchronization of data is absolutely crucial. I would recommend this to anyone who wants to use AI,” emphasized Franz. With precise synchronization in place, sensor fusion is the key approach to significantly increasing the reliability and significance of AI analyses and, in particular, of error classification.

AI in series production

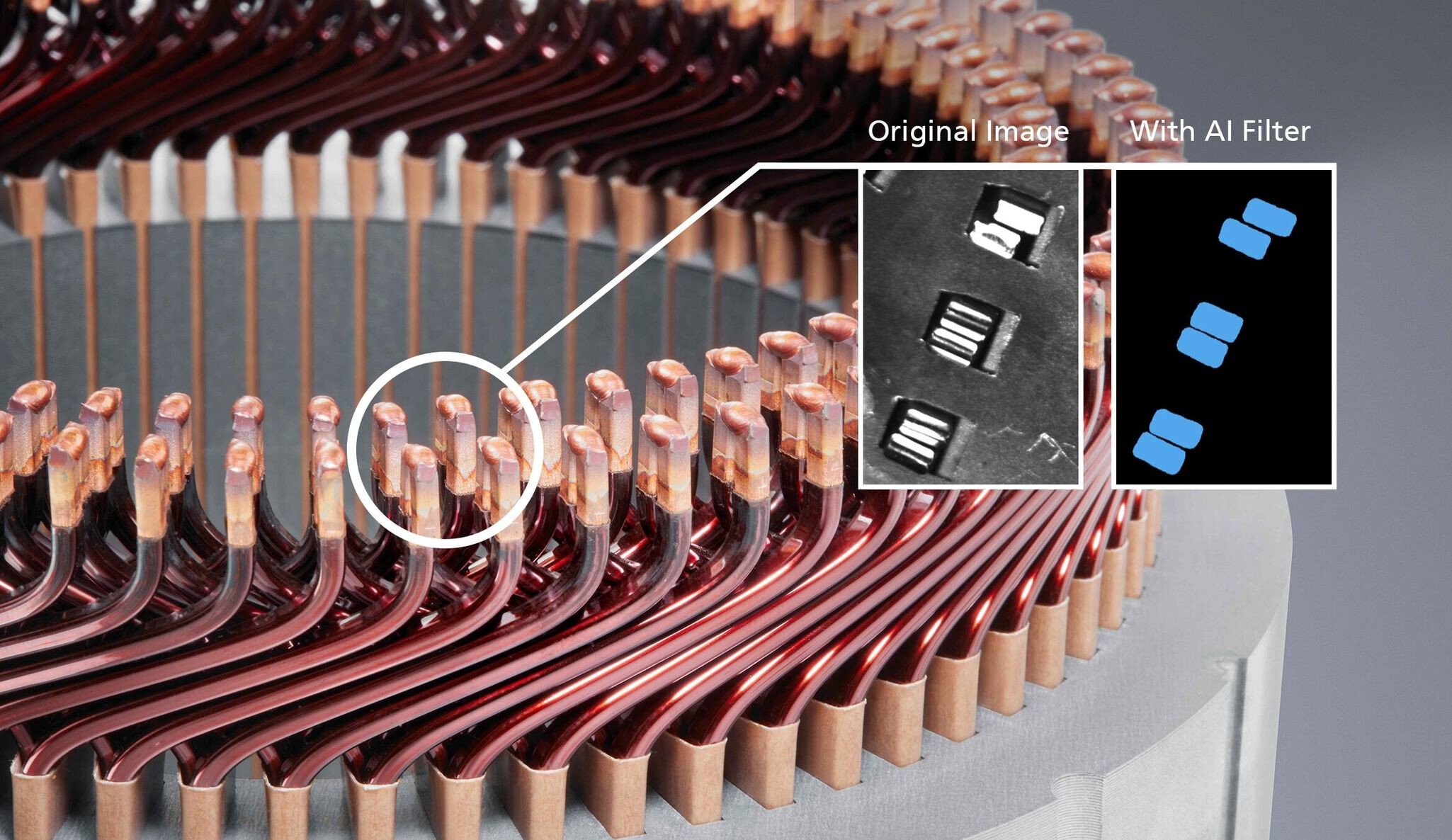

TRUMPF expert Stambke reported on AI series applications in Berlin. TRUMPF relies on user-centered solutions to demonstrate the potential of AI to manufacturing employees without AI experience and to convince them of its added value. Conventional image processing algorithms have so far reached their limits in photonic manufacturing. This also applies to the laser welding of hairpins in electric motors. The copper surfaces reflect incident light very strongly, and varying part quality further complicates imaging with gray value algorithms. However, aligning the laser spots, which are only 50–500 µm in size, requires very precise position information about the hairpins. Neural networks provide this information. An “AI filter” based on semantic segmentation separates the component from the background: it reduces the image to a binarized black-and-white image in which the gray value algorithm reliably recognizes the hairpins. “We create robustness by separating the component from the background and filtering out interference,” says Stambke. Tests on 9,500 pairs of hairpins demonstrated this: the combination of the gray value algorithm and AI filter achieved a first-pass yield of 99.8 percent, and the remaining 0.2 percent were actually faulty pairs. This is good news for anyone who has to perform increasingly complex manufacturing tasks on increasingly valuable components: AI significantly reduces the error rate and ensures that defective parts do not enter the welding process in the first place.
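The article describes this pipeline only at a high level: a segmentation network separates component from background, and a classical gray value algorithm then locates the hairpins. The sketch below covers only the step after the network, assuming it outputs a per-pixel probability that a pixel belongs to a hairpin. The 0.5 threshold and the use of region centroids as hairpin positions are assumptions standing in for TRUMPF's gray value algorithm, whose details are not given here.

```python
from collections import deque

import numpy as np

def binarize(prob_map: np.ndarray, threshold: float = 0.5) -> np.ndarray:
    """Turn a per-pixel 'hairpin' probability map into a black-and-white mask."""
    return prob_map >= threshold

def blob_centroids(mask: np.ndarray, min_pixels: int = 1) -> list[tuple[float, float]]:
    """Return (row, col) centroids of 4-connected foreground regions in scan order."""
    rows, cols = mask.shape
    visited = np.zeros((rows, cols), dtype=bool)
    centroids = []
    for r0 in range(rows):
        for c0 in range(cols):
            if not mask[r0, c0] or visited[r0, c0]:
                continue
            # Breadth-first flood fill collects one connected region.
            queue = deque([(r0, c0)])
            visited[r0, c0] = True
            pixels = []
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = r + dr, c + dc
                    if 0 <= nr < rows and 0 <= nc < cols and mask[nr, nc] and not visited[nr, nc]:
                        visited[nr, nc] = True
                        queue.append((nr, nc))
            if len(pixels) >= min_pixels:  # drop specks smaller than min_pixels
                rs, cs = zip(*pixels)
                centroids.append((sum(rs) / len(rs), sum(cs) / len(cs)))
    return centroids

# Toy 6x8 "segmentation output": two hairpin cross-sections plus weak glare.
prob = np.zeros((6, 8))
prob[1:3, 1:3] = 0.9  # hairpin A
prob[3:5, 5:7] = 0.8  # hairpin B
prob[0, 7] = 0.3      # reflection the network scored low; removed by the threshold
positions = blob_centroids(binarize(prob))  # [(1.5, 1.5), (3.5, 5.5)]
```

Once background and reflections are suppressed in the mask, locating each hairpin reduces to simple region analysis, which illustrates why the separation step makes the downstream algorithm more robust. In practice, pixel positions would still have to be mapped to machine coordinates through a camera calibration.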

TRUMPF is also driving forward AI solutions based on multi-sensor systems, for example for welding bipolar plates in fuel cell stacks. Joining these high-alloy steel plates is challenging due to their complex geometry, material stresses, and foil-like thickness of only 75–100 µm. The task is to produce several meters of absolutely tight seams per plate, for several hundred plates per stack. “If just one connection leaks, the entire stack is unusable,” explained Stambke. The required 100 percent inspection takes two to three minutes, which is not practical in series production. TRUMPF therefore relies on AI-supported, multi-sensor process control, which requires many sensor signals to be merged into a coherent quality statement. In tests, the combination of a high-frequency short-wave infrared (SWIR) camera and a microphone in conjunction with AI has already proven very good at detecting leaky seams. Bipolar plates that the system classified as tight were indeed tight, and the false alarm rate is on a par with that of the significantly more complex measurement methods used previously. AI and photonics could therefore soon trigger a productivity boost in fuel cell production.
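The article does not disclose how TRUMPF merges the camera and microphone signals into one quality statement. As a generic illustration of late fusion, the sketch below scores each seam by how far one camera feature and one acoustic feature deviate from statistics measured on known-tight seams, then combines the two deviations into a single decision. The feature choice, baseline values, equal weights, and threshold are all hypothetical; a production system would more likely use a trained classifier over many features.

```python
from dataclasses import dataclass

@dataclass
class Baseline:
    """Mean and spread of one feature, measured on seams known to be tight."""
    mean: float
    std: float

    def z(self, x: float) -> float:
        """How many standard deviations x lies from the tight-seam mean."""
        return (x - self.mean) / self.std

def fused_score(ir_value: float, audio_value: float,
                ir_ref: Baseline, audio_ref: Baseline,
                w_ir: float = 0.5, w_audio: float = 0.5) -> float:
    """Weighted sum of per-sensor deviations: one number per seam."""
    return w_ir * abs(ir_ref.z(ir_value)) + w_audio * abs(audio_ref.z(audio_value))

def classify_seam(score: float, threshold: float = 3.0) -> str:
    """Flag a seam for follow-up inspection if the fused deviation is too large."""
    return "suspect" if score > threshold else "tight"

# Hypothetical baselines: mean SWIR intensity and acoustic band energy per seam
ir_ref = Baseline(mean=100.0, std=5.0)
audio_ref = Baseline(mean=0.20, std=0.05)

normal = classify_seam(fused_score(102.0, 0.21, ir_ref, audio_ref))  # small deviations
leaky = classify_seam(fused_score(130.0, 0.45, ir_ref, audio_ref))   # large deviations
```

The threshold sets the trade-off between missed leaks and false alarms, the two quantities the test results above are judged on.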